During a recent business trip, I met the Revenue Assurance & Fraud Management heads of a few telcos. One meeting in particular struck me: it was with the AVP of FM at one operator, whom I will call Jack. Jack has been using a Fraud Management System (FMS) for close to 4 years and has certain business challenges to address. He was exploring the option of upgrading the FMS to tackle those challenges and needs.

Jack's primary challenge was to build a business case for the FM upgrade that would show the elusive RoI. Apparently, his team had been struggling to detect fraud, and he believed that an upgrade would help address fraud in the new generation of services (which the current system is not capable of handling) and thus contribute to the RoI.

On probing further, it became clear that he had been under tremendous pressure from the higher-ups to showcase RoI, especially in the recent past due to tough macro-economic conditions. Further questions and discussions with his team members revealed that there have been (and still are) many hurdles in performing their tasks:

a) IT issues pertaining to system availability, performance, processing & tuning

b) Knowledge issues in fine-tuning the rules and thresholds periodically

c) People issues in understanding the domain and carrying out effective and smart investigations

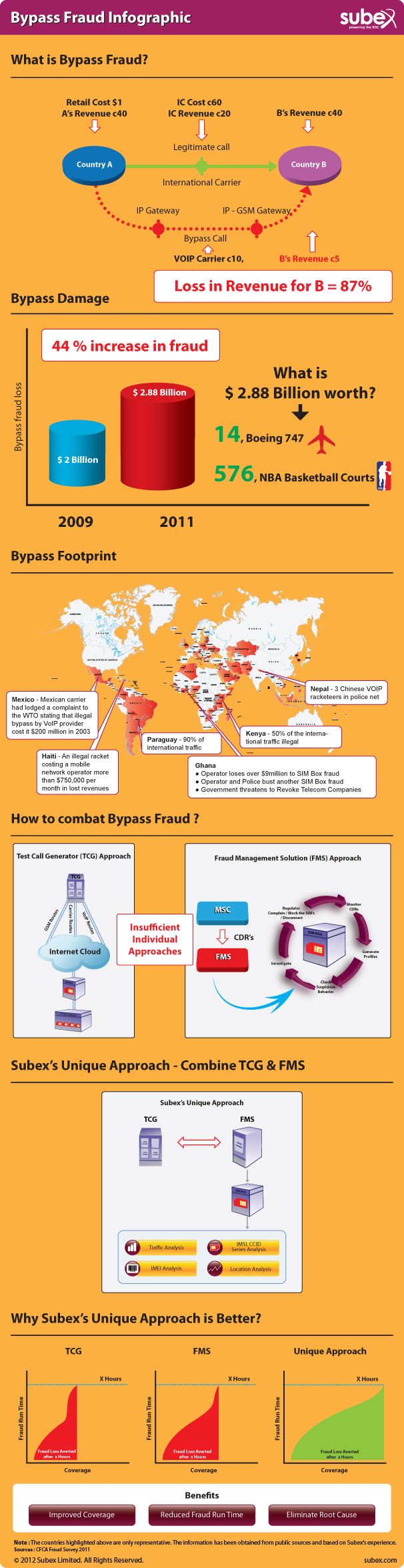

While the operator is on a drive to introduce Next Generation services, more than 88% of its revenue still comes from traditional services: Voice, SMS/MMS, Roaming, Interconnect and GPRS. The top frauds also happen in these areas (see the CFCA fraud loss survey: https://www.cfca.org/fraudlosssurvey/).

It was a revelation for Jack when this data was put up for discussion. It was also evident that an upgrade alone was not going to solve his problem. The need of the hour was an overhaul of the entire ecosystem (to address the 88%), along with the upgrade (to address the remaining 12%).

During the course of the discussion, I suggested a few best practices based on prior experience from Managed Services engagements:

a) Conduct an assessment to baseline the performance of the current function, including a SWOT analysis and detailing a roadmap for growth

b) Based on the assessment, build a business case justifying skills/efficiency improvements and the required technology upgrades

c) As a next step, strengthen the foundation of fraud prevention by improving people and processes, leveraging best practices and experience from vendors and partners if needed

d) Once the basics are addressed, mature to the next level by incorporating technology, process, procedure and skill upgrades

I also cited one such Subex Managed Services engagement in India, where the operator was on an older version of the Fraud Management system when the engagement started and saw more than 2 times RoI within a year, followed by an upgrade to the latest version. The engagement helped them in the following ways:

a) As a first step, they optimized resources and improved fraud operations through a skilled workforce, leveraging existing technology and automating repeatable tasks

b) This resulted in significant financial savings, lowered operational and workforce risks, improved knowledge and enhanced business agility

c) The highly scalable model for future growth also meant they were able to choose specific fraud-related services and technology upgrades depending on their strategic objectives and business priorities

Jack, being a positive person, was able to appreciate a new perspective on his challenges and is looking forward to a detailed assessment to build a case for strengthening the FM team.

After this meeting, I wonder: are there more Jacks out there with the right intentions but not necessarily armed with the right tools?